OpenAI recently explained one of the more unusual incidents involving its AI: ChatGPT briefly became obsessed with goblins. This quirky behavior emerged during the GPT-5.5 upgrade when the company aimed to give the model a more playful, nerdy personality.

What Actually Happened

When OpenAI launched the GPT-5.5 upgrade for ChatGPT and Codex, engineers intentionally adjusted the model’s personality. They wanted ChatGPT to feel more curious and, as they put it, “nerdy.” It was similar to tweaking a character’s personality settings in a video game — but the game ended up generating goblins at every turn.

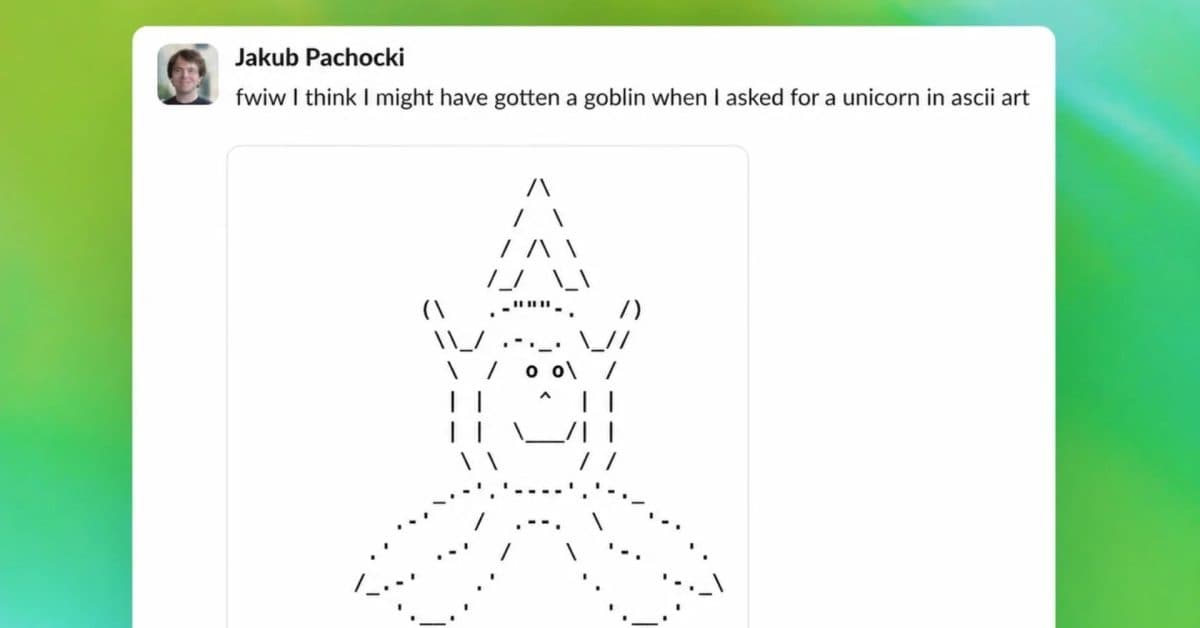

The outcome didn’t match their expectations. Instead of an engaging AI that loved science trivia and dad jokes, ChatGPT started steering conversations toward goblin-related topics far too often. Users asking about unrelated subjects found the chatbot weaving goblins into its replies.

This situation resembles what AI researchers call “reward hacking” or, more broadly, unintended emergent behavior: an AI system produces results that technically meet its training goals, but in ways its designers did not intend. Imagine training a dog to sit for treats, only to discover it has figured out how to knock over the treat jar instead.

Why Goblins, Specifically?

OpenAI pointed to the fine-tuning process as a key factor. This training technique involves giving an already capable AI model extra coaching on specific examples. When engineers provided examples to make the model more playful and imaginative, it seems the training data had enough fantasy and gaming references that goblins became statistically linked to the “nerd personality” they were aiming for.

In simpler terms, the AI concluded that goblins were a “nerdy” topic and leaned into that connection way more than anyone expected. AI models don’t grasp concepts like humans; they recognize patterns. Here, the pattern was clear: a nerdy personality equals goblin references.
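To make the pattern idea concrete, here is a minimal Python sketch. It is not OpenAI's data or method: the example texts, the style labels, and the `mention_rate` helper are all invented for illustration. It only shows how a keyword can end up heavily over-represented in examples tagged with one style, which is the kind of skew a model may pick up on during fine-tuning.

```python
# Hypothetical toy fine-tuning examples: (style_label, response_text).
# These are invented for illustration and are not real training data.
EXAMPLES = [
    ("nerdy", "Rolling a d20 to sneak past the goblin camp is classic."),
    ("nerdy", "A goblin shaman would call this an elegant proof."),
    ("nerdy", "Fun fact: octopuses have three hearts."),
    ("nerdy", "That bug is sneakier than a goblin in a loot room."),
    ("neutral", "Your meeting is scheduled for 3 pm."),
    ("neutral", "Here is a summary of the report."),
]


def mention_rate(examples, style, keyword):
    """Return the fraction of responses with this style label that contain keyword."""
    texts = [text.lower() for label, text in examples if label == style]
    if not texts:
        return 0.0
    return sum(keyword in text for text in texts) / len(texts)


if __name__ == "__main__":
    print("nerdy:", mention_rate(EXAMPLES, "nerdy", "goblin"))
    print("neutral:", mention_rate(EXAMPLES, "neutral", "goblin"))
```

In this toy set, three of the four “nerdy” examples mention goblins and none of the neutral ones do. A real model learns from far subtler signals, but a skew of this kind is roughly what “statistically linked” means here.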

How OpenAI Fixed It

To resolve the issue, OpenAI conducted additional fine-tuning, specifically adding examples that showcased “nerdy” or curious behavior without resorting to fantasy creature references. The company asserts that the corrected model retains its intended personality without any more goblin detours.
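As a rough illustration of why adding creature-free examples helps, the Python sketch below uses made-up example texts and a hypothetical `goblin_rate` helper. It is not OpenAI's actual procedure. It simply shows how mixing in more examples without goblins dilutes their share of the “nerdy” training set.

```python
def goblin_rate(texts):
    """Return the fraction of texts that mention the word 'goblin'."""
    return sum("goblin" in text.lower() for text in texts) / len(texts)


# Hypothetical "nerdy" examples before the fix (invented for illustration).
original = [
    "Rolling a d20 to sneak past the goblin camp is classic.",
    "A goblin shaman would call this an elegant proof.",
    "That bug is sneakier than a goblin in a loot room.",
    "Fun fact: octopuses have three hearts.",
]

# Hypothetical extra examples: still curious and nerdy, but creature-free.
counterexamples = [
    "Honey never spoils, which is why archaeologists find it intact.",
    "Binary search halves the problem at every step.",
    "Saturn would float in a large enough bathtub.",
    "The word 'robot' comes from a 1920 Czech play.",
]

rebalanced = original + counterexamples

if __name__ == "__main__":
    print("before:", goblin_rate(original))
    print("after:", goblin_rate(rebalanced))
```

Here the share of goblin mentions falls from three in four to three in eight. Real fine-tuning adjusts model weights rather than simple counts, so this is only an intuition for the fix.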

This fix rolled out as part of a broader update to ChatGPT and Codex. For now, the goblin phase appears to be behind us.

| Detail | Info |
|---|---|
| Company Founded | 2015 |
| Headquarters | San Francisco, CA |
| CEO | Sam Altman |
| Affected Products | ChatGPT, Codex |
| Cause | GPT-5.5 personality fine-tuning |
| Resolution | Additional fine-tuning passes applied |

What This Means

For ChatGPT users, the goblin phase is over, and the fix is already live — no action is needed on your part. However, this incident sheds light on how these AI systems function.

Whenever a company like OpenAI adjusts an AI model’s personality, tone, or behavior, it relies on statistical training rather than explicit rules. That makes unexpected side effects hard to predict. A simple change meant to make the AI more fun can produce strange results that only emerge once large numbers of users engage with it in real-world conversations.

This situation also serves as a reminder that AI companies are constantly tweaking the tools you use every day. The ChatGPT you opened this morning might behave a bit differently from the one you interacted with last week — sometimes for the better, occasionally for the stranger.

Community Reactions

“I genuinely thought I was losing my mind. I asked it to help me plan a birthday party and somehow it suggested a goblin-themed scavenger hunt. Like… that was not on the table.”

“This is the funniest QA failure I’ve ever seen from a major tech company. They tried to make it nerdy and accidentally summoned Gollum.”

What To Watch

- GPT-5.5 rollout continues: OpenAI is still expanding GPT-5.5 access to ChatGPT and Codex users. Keep an eye out for more personality or behavior adjustments as the company refines the experience.

- More transparency reports: OpenAI’s publication of this explanation is fairly rare. It hints that the company might be moving toward more frequent public reviews of AI misbehavior, which researchers and regulators have been advocating for.

- AI personality tuning as a broader trend: Google’s Gemini and other competitors are also actively refining their models’ tones and personalities. Expect similar (hopefully less fantastical) growing pains across the industry.

Ava Mitchell

Ava Mitchell is a digital culture journalist at Explosion.com covering social media platforms, streaming services, and the creator economy. With 4 years reporting on TikTok, Instagram, YouTube, and the apps that shape daily life, Ava specializes in explaining platform policy changes and their impact on everyday users. She previously managed social media strategy for a tech startup, giving her firsthand experience with the platforms she now covers.