This week, Meta confirmed it’s using artificial intelligence to analyze users’ physical traits, such as height and bone structure, visible in photos and videos. The company says the analysis helps it identify and remove underage users from its platforms.

How the System Works

The AI system scans visual signals in uploaded content to gauge whether a user might be younger than the minimum age requirement of 13. Picture a bouncer who doesn’t check IDs but looks at your wrists and posture to estimate your age. Right now, Meta says this technology is available in select countries, with a broader global rollout planned but no date announced.

When we talk about bone structure analysis, it means the AI examines physical proportions seen in photos and videos. This includes factors like limb length in relation to body height, which typically vary between children and adults. It’s a similar concept to some medical imaging tools, but applied to social media content.
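
To make the idea of "proportions" concrete, here is a minimal Python sketch of one such measurement: leg length divided by standing height. It is an illustration only, not Meta's method. The keypoint names (`head_top`, `hip`, `knee`, `ankle`), the pixel coordinates, and the function `limb_to_height_ratio` are all assumptions made up for this example, and the sketch deliberately stops short of guessing anyone's age.

```python
from math import dist

# Hypothetical 2D keypoints in pixel coordinates, of the kind a generic
# pose-estimation model might output. Names are illustrative assumptions,
# not taken from any Meta system.
Keypoints = dict[str, tuple[float, float]]


def limb_to_height_ratio(kp: Keypoints) -> float:
    """Return leg length divided by standing height, both measured in pixels."""
    leg = dist(kp["hip"], kp["knee"]) + dist(kp["knee"], kp["ankle"])
    height = dist(kp["head_top"], kp["ankle"])
    if height == 0:
        raise ValueError("head_top and ankle coincide; cannot compute a ratio")
    return leg / height


# A made-up standing figure, and the same figure shrunk to half size.
person: Keypoints = {
    "head_top": (100.0, 0.0),
    "hip": (100.0, 90.0),
    "knee": (100.0, 135.0),
    "ankle": (100.0, 180.0),
}
scaled: Keypoints = {name: (x * 0.5, y * 0.5) for name, (x, y) in person.items()}

print(limb_to_height_ratio(person))
print(limb_to_height_ratio(scaled))
```

Both calls return the same value because a ratio does not depend on how large the person appears in the frame. That is one reason proportions, rather than raw pixel sizes, are a plausible signal for a system like this.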

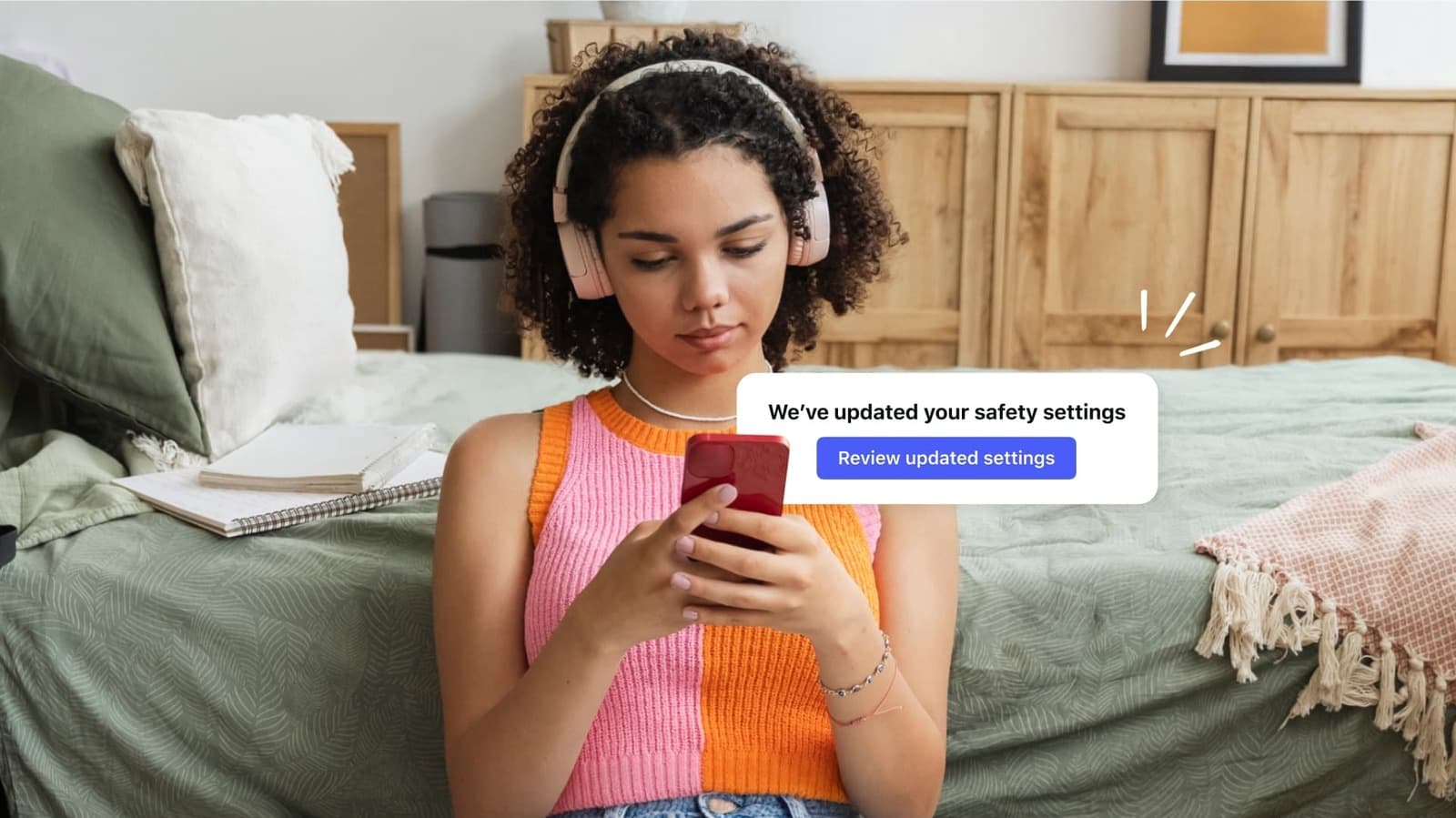

Meta is also expanding its Teen Account system from Instagram to Facebook. Teen Accounts, which limit direct messages, content recommendations, and screen time for users under 18, launched in 2024 as an Instagram-only feature.

Why Meta Is Doing This Now

Meta faces ongoing pressure from lawmakers, regulators, and parents regarding child safety on its platforms. Several U.S. states have passed or are working on legislation that demands stricter age verification for social media users. The EU’s Digital Services Act also requires large platforms to protect minors.

Traditional age verification methods, like having users enter their birthdate, are easy to bypass: a 12-year-old can simply type in a fake birth year. Meta’s approach aims to go further by using signals that users haven’t explicitly provided.

What This Means for Everyday Users

For most adult users, the impact should be minimal. The system targets accounts that seem to belong to kids, not every adult’s selfies. However, this technology raises important questions about how Meta analyzes the photos and videos you share, and what other conclusions it might draw from that visual data in the future.

If you’re a parent, it’s good to know about the Teen Account expansion to Facebook. This means if your teenager has a Facebook account, Meta’s age-detection and restriction tools apply there too, not just on Instagram.

There’s also a concern about false positives. Adults who appear younger could be flagged by the system. Meta hasn’t detailed how the appeal or review process will work if an adult’s account gets wrongly restricted.

| Meta: Company Snapshot | |
|---|---|
| Stock (META) | $603.94 (-0.17%) |
| CEO | Mark Zuckerberg |
| Founded | 2004 |
| Headquarters | Menlo Park, CA |
| Sector | Social Media |
| Teen Account expansion | Instagram → Facebook |
| Rollout status | Select countries, broader rollout planned |

The Privacy Trade-Off

This is where things get tricky. Using AI to analyze users’ bodies, even indirectly through their posts, goes well beyond simply asking for a birthdate or requiring a government ID. Meta is essentially adapting its content-scanning systems into age-detection tools by interpreting biological signals from your photos.

Privacy advocates have long raised alarms about “biometric inference,” where a platform makes personal conclusions based on observed physical traits instead of information you willingly provided. Bone structure and height estimation fall into this category.

So far, Meta hasn’t released detailed information on accuracy rates, error margins, or the specific types of images that trigger this analysis.

Community Reaction

“So Meta is literally scanning your body in photos now to decide if you’re allowed to use the app. That’s insane. I get that protecting kids matters but this feels like a line crossed.”

“Honestly, if it keeps kids off these apps, I’m for it. Every other method has failed. The real question is what else they’re doing with that analysis.”

What To Watch

- Global rollout timeline: Meta hasn’t provided a specific date for when the bone structure AI system will expand beyond its current test countries. Keep an eye out for announcements related to upcoming EU regulatory deadlines.

- Legislative response: Ongoing U.S. congressional hearings on child safety and social media in 2026 will likely discuss this new system. Lawmakers will consider whether AI-based age detection is sufficient or if mandatory ID verification is necessary.

- Privacy challenges: With Meta’s ongoing legal issues, including a separate 2026 copyright lawsuit from publishers regarding AI training data, privacy advocates and possibly regulators will closely examine the biometric implications of this system.

- Accuracy data: If Meta shares technical details on how often the system wrongly flags adult users, that will be crucial to monitor.

Sources: TechCrunch | Engadget

Ava Mitchell

Ava Mitchell is a digital culture journalist at Explosion.com covering social media platforms, streaming services, and the creator economy. With 4 years reporting on TikTok, Instagram, YouTube, and the apps that shape daily life, Ava specializes in explaining platform policy changes and their impact on everyday users. She previously managed social media strategy for a tech startup, giving her firsthand experience with the platforms she now covers.