Google is gearing up to make smart glasses a key part of its future. The upcoming Google I/O 2026 is set to launch a new wave of AI-powered eyewear in collaboration with various hardware partners.

| Ticker | GOOGL |
|---|---|
| Stock Price | $400.71 (+0.66%) |
| CEO | Sundar Pichai |
| Headquarters | Mountain View, CA |
| Founded | 1998 |
| Sector | Big Tech |

Why Smart Glasses, Why Now?

More than a decade ago, Google Glass, the company’s first wearable computing eyewear, quickly became a cultural joke after its 2013 release. The cameras made many people uncomfortable, battery life was limited, and everyday consumers found the use cases unappealing. By 2015, Google had quietly discontinued the consumer version.

However, the smart glasses category persisted, thanks to Meta’s ongoing efforts. The Ray-Ban Meta glasses, which allow users to take photos, listen to music, and now interact with an AI assistant, have found a solid audience. This success seems to have rekindled Google’s interest in the market, and now the company is teaming up with partners.

According to CNET, Google I/O 2026 will likely feature announcements about a variety of smart glasses from companies beyond Meta. This suggests Google is focused on building a broader ecosystem instead of trying to dominate the entire market.

What Google Is Actually Building

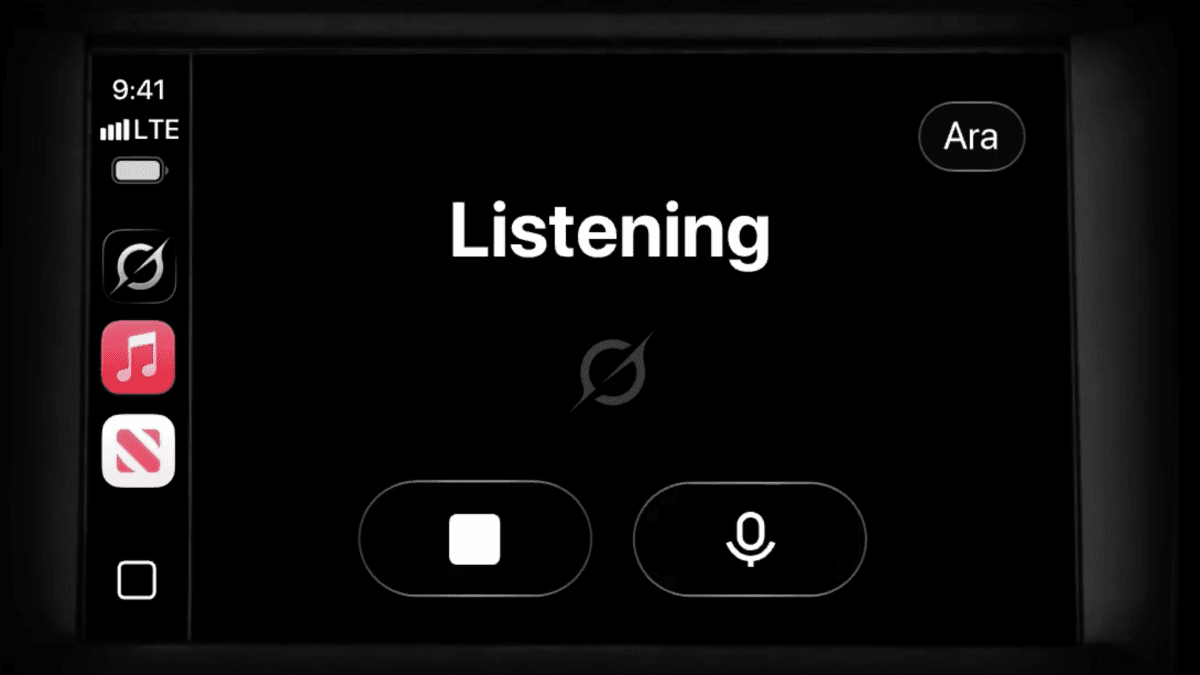

This time around, the key component is Gemini, Google’s AI model. Think of it as something similar to ChatGPT, but integrated directly into Google’s products. The new generation of AI glasses is designed to listen, understand context, and respond, so wearing them feels more like having a hands-free assistant than wearing a mini computer.

This approach is similar to what Meta has done with its Ray-Ban glasses: keep the design looking normal, remove the screen, and let the AI handle tasks through audio. Google’s advantage lies in Gemini’s deep integration with essential services like Search, Maps, Gmail, and the wider Google ecosystem. Hundreds of millions of people already depend on these services daily.

Imagine this: instead of pulling out your phone for directions or to translate a sign, you just look at something and ask. The glasses will see what you see, hear what you say, and respond directly in your ear.

The Partner Play

One interesting aspect leading into I/O is Google’s apparent shift toward being a platform provider instead of just a hardware manufacturer. This opens the door for other eyewear brands, including well-known fashion names, to release their own AI-powered frames that run on Google’s software.

This strategy makes more sense than going solo. Google brings the AI and software expertise and does not need to manufacture the frames itself. If a trusted brand for sunglasses or prescription lenses introduces a pair running Gemini, it becomes a much easier sell to consumers.

Mashable’s Google I/O preview highlights smart glasses alongside Gemini AI updates and Android changes as the top things to watch at this year’s conference.

What This Means for Everyday Users

If Google and its partners nail this, smart glasses could move beyond being a novelty to becoming genuinely useful. Here’s what that might look like:

- Navigation without looking at your phone. Get walking directions spoken into your ear while you focus on where you’re going.

- Real-time translation. Hear someone speaking another language and receive an instant audio translation.

- Hands-free search. Ask questions while cooking, driving, or exercising without needing to touch a screen.

- Calendar and email at a glance. Quick audio summaries of your schedule or inbox updates.

Of course, challenges remain, including battery life, pricing, and whether the AI will be reliable enough in real-time situations. If a glasses assistant mishears you or gives wrong directions, it could be more than just annoying; it might lead to some embarrassing or disruptive moments.

Privacy will also be a hot topic. Cameras built into glasses worn all day in public places raise the same concerns that derailed Google Glass initially. How Google tackles these issues, both technically and in its messaging, will be crucial for how the public receives these glasses.

Community Reactions

“The Ray-Ban Metas proved people will actually wear these if they look normal. Google has way better AI than Meta. This combo could actually be it.”

“I’ll believe it when I see a price tag. Google Glass was $1,500. If these are $500+, most people aren’t buying them.”

What To Watch

- Google I/O 2026 is the next big event to watch — expect the keynote to outline the smart glasses strategy, potential hardware partners, and details about Gemini integration.

- Pricing and availability announcements will show whether this product targets early adopters or aims for a wider audience from the start.

- Partner reveals — which eyewear brands Google collaborates with will indicate how serious they are about building this ecosystem.

- Meta’s response: with its head start from the Ray-Ban glasses, Meta is unlikely to stay quiet. Any Google announcement could ramp up competition in this space.

Sources: CNET, CNET Mobile, Mashable

Daniel Park
