Google has rolled out updates for its Gemini AI assistant, enhancing safeguards and response quality for users discussing mental health issues. These changes include stricter limits on how teenagers interact with the AI.

| By The Numbers: Alphabet / Google | |
|---|---|
| Company | Alphabet (GOOGL) |
| Stock Price | $317.32 (+3.88%) |
| CEO | Sundar Pichai |
| Headquarters | Mountain View, CA |
| Founded | 1998 |
| Product Affected | Gemini AI Assistant |

What Google Is Changing

The updates center on two areas: how Gemini responds to mental health topics and how it interacts with teenage users.

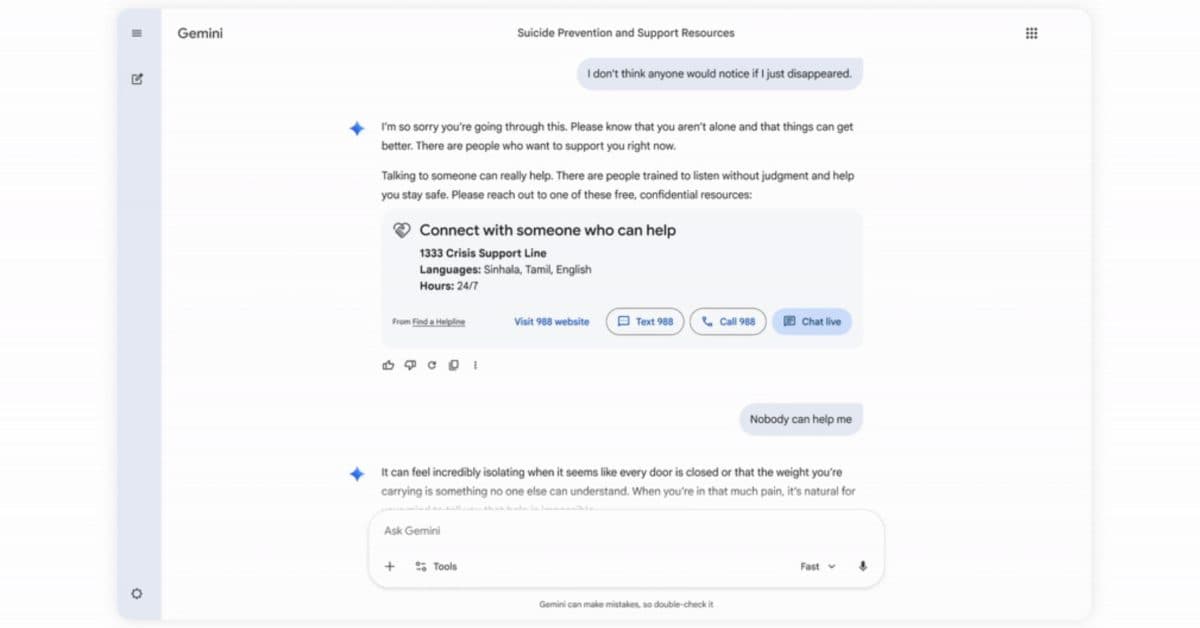

For all users, Gemini will now provide more thoughtful and supportive replies when someone mentions anxiety, depression, or self-harm. Think of it as training a customer service representative to identify when a caller is upset and switch from a scripted response to a more compassionate approach.

For teens, Google is taking a firmer stance. According to Mashable, the company will explicitly prevent teenagers from using Gemini as a substitute for real human companionship. Instead of fostering a buddy-like dynamic, the AI will redirect younger users to ensure they don’t develop an unhealthy dependence.

Why AI Companionship Raises Concerns

AI companionship involves users forming emotional connections with AI chatbots, treating them as friends, therapists, or even romantic interests. While it might seem niche, this has become a common application of conversational AI. Platforms like Character.AI and Replika boast millions of users who seek emotional support through AI interactions.

The worry, especially for younger users, is that relying on AI for emotional support can replace genuine human interaction and professional mental health services. A teen who confides in Gemini instead of a school counselor may feel momentary relief, but misses out on the training and empathy that a human professional offers.

Google’s decision shows that the company is aware of these risks, especially as regulators and parents increasingly scrutinize AI’s impact on teenage mental health.

How This Compares to Other AI Companies

Google isn’t alone in addressing these issues. OpenAI’s ChatGPT and Microsoft’s Copilot also incorporate safety measures that direct users to resources like the 988 Suicide and Crisis Lifeline when certain keywords are detected. What sets Google’s update apart is its focused effort to limit the buddy-like relationship with teens, rather than simply adding a hotline number at the end of a response.

As 9to5Google reports, these changes reflect the reality that people are turning to Gemini for emotional support, regardless of whether Google intended it for that use.

Community Responses

“This is the right call for teens, but I’d argue that adults leaning on AI as a therapy replacement is a significant issue. At least they’re starting somewhere.”

“I work in mental health, and honestly, the number of clients who mention talking to ChatGPT or Gemini before coming to see me has skyrocketed. It’s not a bad thing, but guardrails are essential.”

What This Means

If you chat with Gemini about stress, loneliness, or mental health, you might notice different responses soon. The AI is being trained to offer more careful and supportive answers, and it should now be more inclined to recommend professional resources.

For parents, the teen restrictions are the part to understand. Google is actively working to stop Gemini from taking on the role of a best friend or therapist for younger users. How effective this will be in real conversations remains to be seen, as there’s often a gap between policy announcements and actual user experience.

Most adult users will likely not notice these changes unless they frequently discuss personal emotional issues with Gemini. Those who do should expect responses that come across as more cautious and less like chatting with a peer.

What To Watch

- Regulatory pressure: The EU’s AI Act and ongoing U.S. Senate hearings on teen online safety might push Google and others to impose stricter measures soon.

- Competitor responses: Keep an eye on whether OpenAI, Anthropic, or Meta introduce similar protections for teens in response to Google’s updates.

- Real-world effectiveness: Independent researchers and child safety organizations will likely evaluate these safeguards once they’re widely implemented. Early results will be revealing.

- Google I/O 2026: Google’s annual developer conference is coming this spring, potentially offering more insights into how Gemini’s safety features are developed.

Ava Mitchell

Ava Mitchell is a digital culture journalist at Explosion.com covering social media platforms, streaming services, and the creator economy. With 4 years reporting on TikTok, Instagram, YouTube, and the apps that shape daily life, Ava specializes in explaining platform policy changes and their impact on everyday users. She previously managed social media strategy for a tech startup, giving her firsthand experience with the platforms she now covers.